AI Undress Video: What Everyone Should Know About Digital Manipulation And Its Real Impact

The digital world is always changing, and new kinds of technology keep popping up that really make us think. One that's been getting a lot of chatter lately is the "AI undress video." This isn't just clever photo editing; it uses AI programs to make it seem like someone in a video is without clothes, even though they never were. This kind of tech raises a lot of questions about privacy, about what's real and what's not, and about how we treat each other online. We're going to talk about what this is, why it matters, and what we can all do to be a bit safer in this fast-moving digital space.

The tools that make "AI undress video" possible are an offshoot of other artificial intelligence projects. Some of the same basic ideas that help researchers design millions of possible compounds for medicine, or train learning models for tricky tasks, are also behind these more troubling applications. So the problem isn't that the AI itself is bad, but how some people choose to use it. It's a stark reminder that, as Howard University president Ben Vinson III put it, AI needs to be "developed with wisdom," a point that feels particularly true here.

Our digital lives are pretty much intertwined with AI these days. From the helpful assistants on our phones to the complex systems that manage big data, AI is everywhere. But with great technological ability comes great responsibility, and the discussions around "AI undress video" are really about that responsibility. As these powerful tools grow, we also need to grow our understanding of their potential downsides and of how to stop them from causing harm. This is an important conversation for all of us to have.

Table of Contents

- What is AI Undress Video and How Does It Work?

- The Real Impact on Individuals and Society

- Identifying and Addressing AI-Generated Manipulation

- The Ethical Dilemma and the Future of AI

- Frequently Asked Questions About AI Undress Video

What is AI Undress Video and How Does It Work?

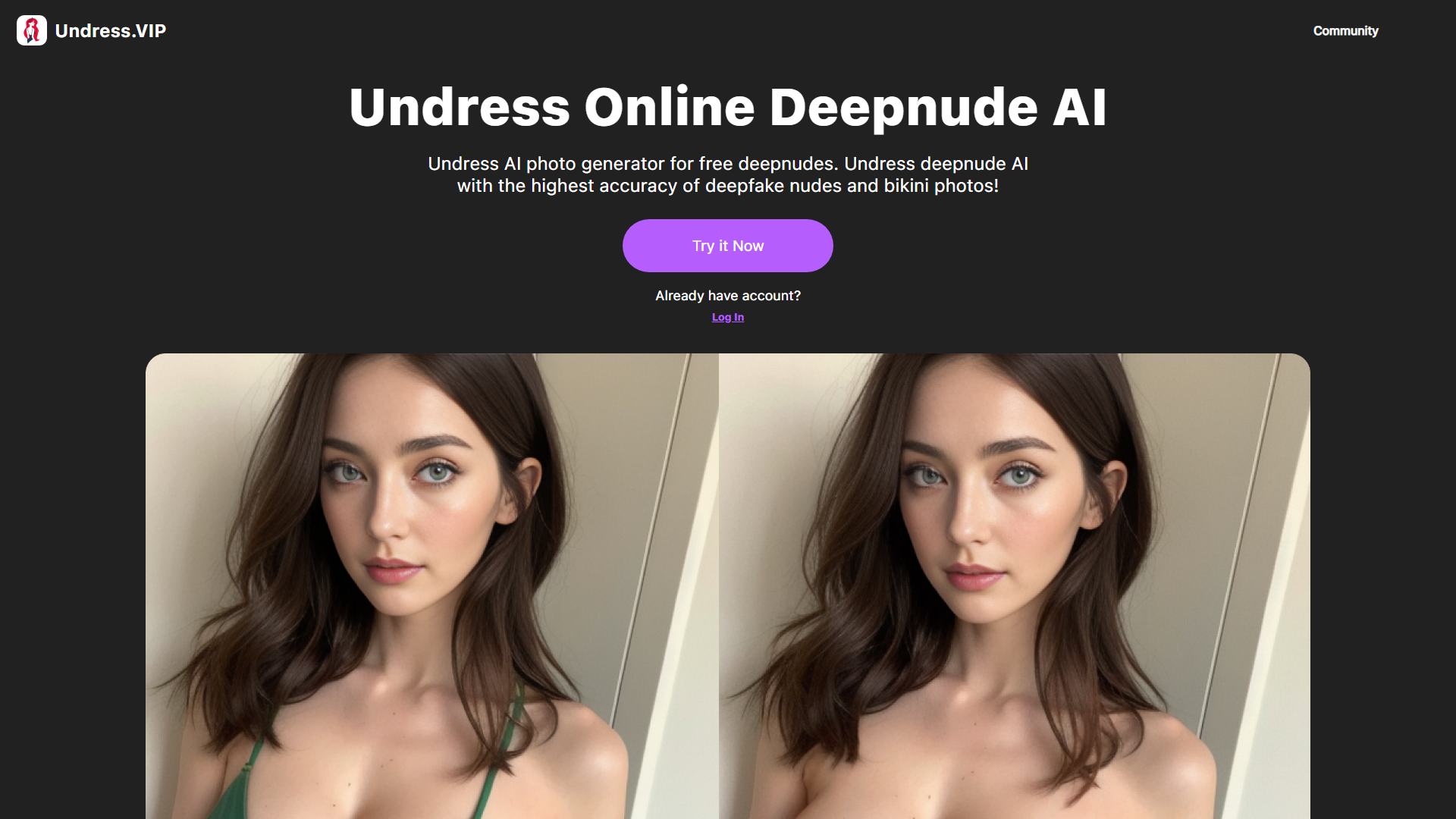

So, what exactly are we talking about when we say "AI undress video"? It's a kind of digital trickery in which artificial intelligence programs are used to alter videos. These programs digitally remove clothing from people in existing footage, making it look like they are nude. This is done without their participation and without their permission. It's a disturbing application of technology, and it highlights some of the less thoughtful uses of AI that are out there.

The Technology Behind It

The core technology behind these videos is usually a form of generative AI, often called "deepfake" tech. These are algorithms that learn from vast amounts of data and can then create new images or videos that look very real. For "undress" videos, the AI is trained on how clothing typically covers the human body, and it then "fills in" what it predicts would be underneath. The result is a fabrication, not a view of the real person, but this kind of image synthesis is getting more convincing all the time, which is concerning.

Researchers at places like MIT work with generative AI algorithms for really positive things, like designing millions of potential new medicines. That's a very different use, but the underlying idea of an AI creating something new based on what it has learned is similar. The difference is in the intent and in the ethical boundaries, which are completely ignored in the case of "AI undress video." It's a stark contrast between using AI for good and using it for harm, and that's something we should all be aware of.

Why This is a Growing Concern

The concern around "AI undress video" is growing, partly because the tools to create these videos are becoming more accessible. What once required a lot of technical skill and powerful computers can now sometimes be done with simpler software or even online services. This ease of access means more people can create and, sadly, share such content. It's a worrying trend because it lowers the barrier to causing significant harm to individuals.

Also, the quality of these manipulated videos is improving, so it's getting harder and harder to tell what's real and what's fake. This makes them a serious threat to personal privacy and reputation. When you can't trust what you see, it shakes the foundations of how we interact online. This is why discussions about AI ethics, like those raised by leaders at places like Howard University, are so important. They emphasize that AI should be "developed with wisdom," which, in this context, really means with a strong moral compass.

The Real Impact on Individuals and Society

The existence of "AI undress video" has very real and devastating consequences. It's not just a technical issue; it's a human one. The impact can spread far beyond the individual targeted, affecting their loved ones and society's trust in digital media as a whole. It's a ripple effect, where one harmful act can have many unforeseen consequences.

Privacy and Consent Issues

At its core, "AI undress video" is a massive violation of privacy and a complete disregard for consent. Someone's image is taken and altered without their permission, often to create sexually explicit content. This is a profound breach of their personal space and dignity. It's like someone taking a part of you and twisting it into something it isn't, and that's just not right. The fundamental right to control one's own image and how it's used is totally undermined here.

This kind of digital manipulation also creates a culture where people might feel less safe sharing their images online, even innocent ones. If a picture of you, fully clothed, can be turned into something else, it makes you think twice, doesn't it? This erosion of trust is a serious matter. It highlights the urgent need for digital literacy and for stronger legal protections for individuals in the face of such advanced AI misuse.

The Spread of Misinformation and Disinformation

Beyond the direct harm to individuals, "AI undress video" contributes to a broader problem of misinformation and disinformation. When fake videos look incredibly real, it becomes harder for people to distinguish truth from fiction. This can erode public trust in media and information sources. It's a slippery slope when you can't be sure if what you're seeing is genuine.

This challenge is not just about these specific videos; it's about the general ability of AI to create believable but false narratives. This means we all need to be more critical consumers of digital content. We need to question what we see and hear, and not just accept it at face value. That kind of constant vigilance can be tiring, but it's important.

Emotional and Psychological Toll

For the people who are targeted by "AI undress video," the emotional and psychological damage can be immense. Imagine having your image manipulated and spread without your consent, especially in such a personal and violating way. It can lead to feelings of shame, humiliation, anger, and deep distress. It's a very personal attack, and the consequences can last a long time.

Victims might experience anxiety, depression, or even social withdrawal. Their relationships could suffer, and their professional lives might be affected. The feeling of being violated, of having something so intimate stolen and twisted, is profound. This is why it's so important to understand the human cost of this technology and to support those who have been affected. It's not just a digital problem; it's a very real human tragedy for many.

Identifying and Addressing AI-Generated Manipulation

So, given the rise of "AI undress video" and other deepfake content, an important question is: how can we tell what's real and what's not? And what can we do about it? It's a challenge, yes, but there are steps we can take to be more aware and to help combat this misuse of technology.

Signs to Look For

While AI-generated content is getting better, there are often still some subtle clues that something might be fake. For instance, look for unusual blurring around the edges of a person, or strange flickering. Sometimes facial expressions seem a bit off, or they don't quite match the voice. The lighting in a scene might be inconsistent, too. Pay attention to details like hair, skin texture, and even how people blink, since AI sometimes struggles with natural eye movements. It's about really observing the small things that might not seem quite right.

Also, check the source of the video. Is it from a reputable news outlet or a verified social media account? If it's shared widely by unknown sources, or if the accompanying story seems sensational, that's a red flag. Context is very important here. A team of MIT researchers founded Themis AI to quantify artificial intelligence model uncertainty and address knowledge gaps. That work is relevant to the idea of spotting when an AI might be creating something that isn't quite right or has gaps in its "knowledge" of reality.

What You Can Do to Protect Yourself

Protecting yourself in this digital landscape involves several steps. First, be careful about what images and videos you share online, and who you share them with. Once something is out there, it's very hard to control. Second, review your privacy settings on social media platforms and make them as strict as you feel comfortable with. It's a good idea to limit who can see your personal content.

If you come across "AI undress video" or any other manipulated content, do not share it. Sharing it only contributes to the harm. Instead, report it to the platform where you found it. Most social media sites and video hosting services have policies against non-consensual explicit content and deepfakes. You can also learn more about digital privacy and understanding online consent on our site. It's a collective effort to keep the internet a safer place for everyone.

Advocating for Responsible AI Development

Beyond personal actions, there's a bigger picture: advocating for AI to be developed and used responsibly. This means supporting organizations and policies that prioritize ethical AI. It's about encouraging tech companies to build safeguards into their products and to consider the societal impact of their innovations. Ben Vinson III, president of Howard University, made a compelling call for AI to be "developed with wisdom." This is a principle that needs to guide all AI creation, especially when it touches on sensitive areas.

We need to push for more research into AI detection tools, too. Just as AI can create these fakes, other AI can be developed to spot them. This is a bit of a technological arms race, but it's a necessary one. Supporting efforts that focus on ethical guidelines and transparency in AI development is crucial for the future. It's about ensuring that the good uses of AI, like those from MIT researchers developing more reliable learning models, outweigh the harmful ones.

The Ethical Dilemma and the Future of AI

The existence of "AI undress video" presents a stark ethical dilemma for the entire field of artificial intelligence. It forces us to confront the question of how far is too far, and what kinds of applications we, as a society, are willing to tolerate. It's a complex issue with no easy answers, but it's one we absolutely need to discuss openly.

Balancing Innovation with Safety

There's always a tension between encouraging innovation and ensuring public safety. AI is a powerful tool with the potential to solve some of the world's biggest problems, from health to climate change, and we don't want to stifle that progress. However, as we've seen with "AI undress video," unchecked innovation can lead to significant harm. So it's about finding the sweet spot where new tech can flourish with strong ethical guardrails in place.

This means having ongoing conversations between technologists, ethicists, policymakers, and the public. It means that companies developing AI have a responsibility to consider the potential for misuse and to build in protections. It's a bit like building a bridge: you want it to be innovative, but you also want it to be safe for everyone who uses it. AI carries risk, and we need to manage that risk responsibly.

The Role of Policy and Education

Policy and education are two of our strongest tools in addressing the challenges posed by "AI undress video." Governments around the world are starting to look at laws specifically targeting deepfakes and non-consensual explicit content. These laws can provide legal recourse for victims and deter perpetrators. It's an important step in holding people accountable for their actions in the digital space.

Education is just as vital. Teaching digital literacy from a young age, helping people understand how AI works, and fostering critical thinking skills are all essential. If people are more aware of the risks and how to spot manipulated content, they are less likely to be fooled or to inadvertently spread harmful material. It's about empowering everyone to navigate the digital world with greater confidence and greater safety. This is a collective learning process for all of us.

Frequently Asked Questions About AI Undress Video

People often have a lot of questions about this topic, which is understandable given how new and concerning it is. Here are a few common ones that come up.

Is AI undress video legal?

The legality of "AI undress video" varies by region, but creating or sharing non-consensual explicit content, even if it's AI-generated, is illegal in many places. It often falls under laws related to revenge porn, harassment, or image-based sexual abuse. It's a serious offense that can carry significant legal consequences for those involved.

How does AI undress video work?

Basically, these videos use generative AI algorithms, a bit like those used for other creative AI tasks. The AI is trained on many images to learn how bodies look and how clothing covers them. It then applies this learning to an existing video of a person, digitally removing their clothes and filling in fabricated nudity. It's a complex process that relies on advanced computer vision and machine learning techniques.

What are the dangers of AI undress videos?

The dangers are significant. They include severe violations of privacy, psychological harm to the targeted individuals, damage to reputations, and the spread of misinformation. These videos can also contribute to a culture of online harassment and abuse. It's a very real threat to personal safety and dignity in the digital age, and that's why we need to be so vigilant.
